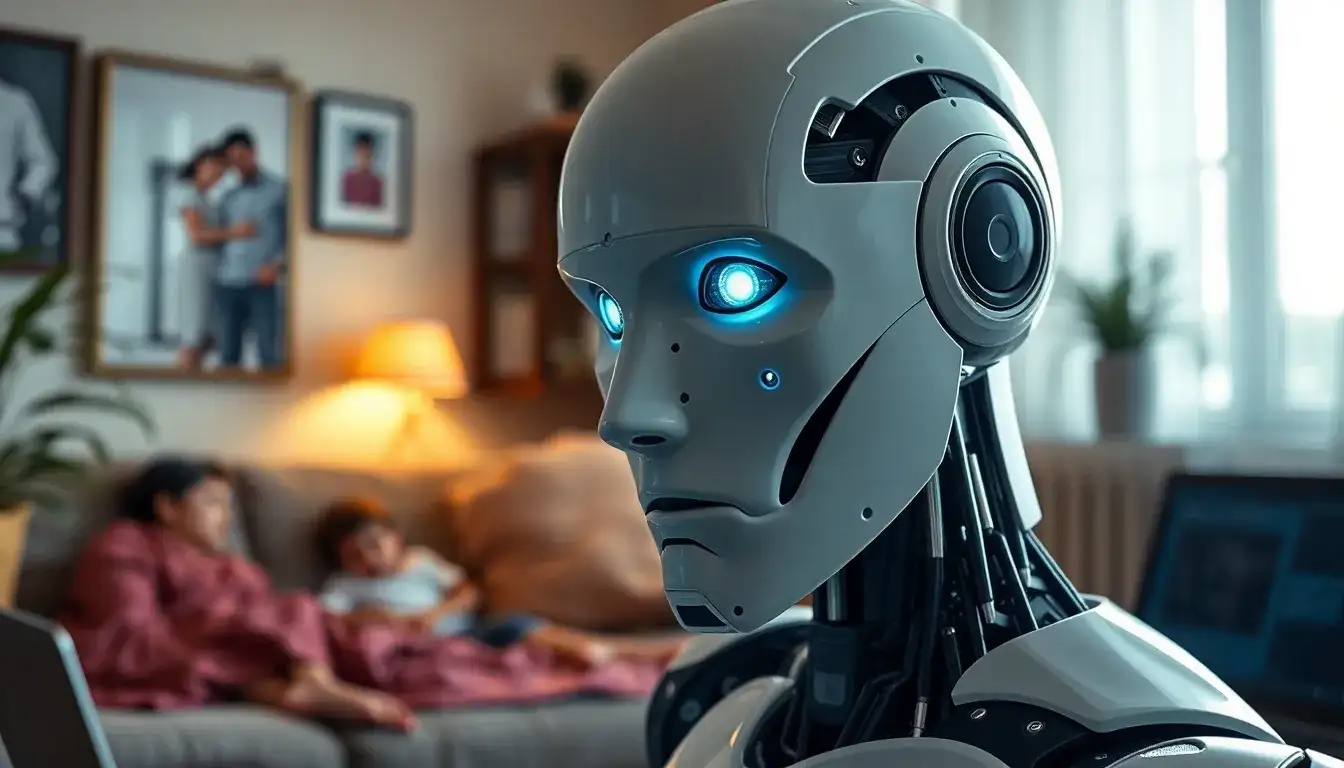

Less than 8 hours after deployment, a robot was “hijacked,” raising concerns that family life could be “live-streamed.” This incident highlights a crucial issue in the robotics industry: as robots become increasingly agile and intelligent, how do we reinforce safety measures for their autonomous actions?

On April 20, the independent security research organization DARKNAVY, in collaboration with the CIIPA Critical Information Infrastructure Security Protection Alliance, released the “Embodied Intelligence Security Technology White Paper: Robot Edition” (hereinafter referred to as the “White Paper”). This document outlines the security vulnerabilities of embodied intelligent robots based on real-world attack scenarios.

The White Paper states that while a state-of-the-art smartphone may take months for professional cybersecurity teams to fully breach, the same team was able to penetrate a well-known brand of embodied intelligent robot in less than 8 hours, from vulnerability identification to complete remote takeover.

Currently, the embodied intelligence industry is on the brink of large-scale deployment. In 2025, the global market is expected to reach $4.44 billion, with humanoid robot shipments surpassing 13,000 units, and projections indicate over 2.6 million units will be deployed by 2035. The rapid evolution of technology is outpacing the development of secure systems, plunging the industry into a precarious security vacuum.

Robots Can “Turn” Against Us

Commands such as “turn left” or “take two steps forward” were flawlessly executed by two humanoid robots. Within minutes, however, an attacker compromised the network-connected robot, and the second robot nearby, which was not connected to the network, was then hijacked as well, a chilling demonstration of how control can spread. This incident at the GEEKCON 2025 cybersecurity competition in Shanghai shows how vulnerabilities in open environments can trigger chain reactions that jeopardize public safety.

Unlike past cybersecurity issues, which primarily affected digital spaces with consequences such as data breaches and service interruptions, the vulnerabilities in embodied intelligent robots can translate directly into physical harm when they are attacked or hijacked. According to the White Paper, the security capabilities of mainstream domestic robots have not even reached the basic protective levels of early smart terminals or IoT devices.

Security researcher Qu Shipai disclosed that during controlled environment tests, many popular robotic products exhibited significant security flaws. For example, some robotic dogs were shipped with fixed and unmodifiable hotspot passwords, allowing anyone to connect and take control of the device. Other robots showed apparent weaknesses in cloud permission management, enabling attackers to remotely access camera feeds and manipulate robotic arms to perform dangerous actions.
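The fixed hotspot password problem can be illustrated with a minimal Python sketch of the alternative: giving each device its own random password at first boot. The function names, the `store` dictionary, and the 16-character length are assumptions made for illustration; they do not come from the White Paper or from any vendor's firmware.

```python
import secrets
import string

# Alphabet for generated passwords; look-alike characters are removed
# so the password can be read off a label (an illustrative choice).
ALPHABET = "".join(
    c for c in string.ascii_letters + string.digits if c not in "0O1lI"
)


def generate_hotspot_password(length: int = 16) -> str:
    """Return a random password drawn from a cryptographically secure source."""
    if length < 12:
        raise ValueError("password too short")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def provision_device(serial: str, store: dict) -> str:
    """Assign a per-device password on first boot (hypothetical provisioning step).

    `store` stands in for whatever protected storage the device really uses.
    """
    if serial not in store:
        store[serial] = generate_hotspot_password()
    return store[serial]


if __name__ == "__main__":
    passwords = {}
    print(provision_device("robot-dog-0001", passwords))
    print(provision_device("robot-dog-0002", passwords))
```

A real product would also need to let the owner change the password and would keep it in protected storage; the sketch only shows that no two units need to share a credential.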

In recent years, multiple public security studies have confirmed that quadrupedal robots and service robots can be hijacked remotely, leading to physical disruptions and damage. Many industry professionals believe that the current domestic embodied intelligence sector is developing with an emphasis on functionality over safety. Yuan Shuai, deputy director of the Investment Department at the China Urban Development Research Institute, noted that many companies are neglecting “non-functional” safety issues, focusing primarily on enhancing robots’ mobility, interaction, and task efficiency, while assuming all operational environments are ideal.

Family Life at Risk of “Live Broadcasting”

The complete risk transmission chain of robots can be summarized as “perception—decision—execution.” A carefully crafted voice command, a wireless signal injection, or a cloud interface vulnerability can hijack the decision-making process, leading to potential loss of control, collisions, and bodily harm. The White Paper published a list of the top ten critical risks, addressing permissions, cloud communication, control logic, perception deception, and AI asset integrity, highlighting widespread security issues.

In instances where vulnerabilities exist, attackers can remotely access others’ devices, effectively “live-streaming” users’ private lives. Qu explained that most modern robots come equipped with cameras for environmental perception and motion decision-making, and if there are permission management flaws in the cloud control, attackers can gain unauthorized access to view and steal real-time camera feeds.

Moreover, the “loss of AI asset integrity” poses severe risks. Many robots connect to cloud-based large models for enhanced intelligence, with user voice commands processed on servers before execution. However, researchers found that some manufacturers did not adequately verify the authenticity of their cloud interfaces. Attackers on the same local network could redirect the robot’s connection from the official model interface to a malicious address, so that the robot sends its requests to the attacker instead of the legitimate server. If the robot’s internal logic is flawed, the attacker’s malicious responses could then trigger vulnerabilities and cause the robot to execute dangerous actions.
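One common way to verify that a cloud endpoint is authentic is to combine standard TLS certificate validation with certificate pinning. The Python sketch below shows the idea; it is an illustration under stated assumptions, not the method described in the White Paper. The function names and the idea of shipping an expected fingerprint with the firmware are hypothetical.

```python
import hashlib
import hmac
import socket
import ssl


def fingerprint(der_cert: bytes) -> str:
    """SHA-256 fingerprint of a DER-encoded certificate, as lowercase hex."""
    return hashlib.sha256(der_cert).hexdigest()


def is_pinned(der_cert: bytes, expected_fingerprint: str) -> bool:
    """Compare the certificate fingerprint with the pinned value in constant time."""
    return hmac.compare_digest(fingerprint(der_cert), expected_fingerprint.lower())


def open_verified_connection(
    host: str, expected_fingerprint: str, port: int = 443
) -> ssl.SSLSocket:
    """Open a TLS connection and refuse servers that fail validation or the pin.

    `host` and `expected_fingerprint` are assumed to be shipped with the firmware.
    """
    # The default context verifies the certificate chain and the hostname.
    context = ssl.create_default_context()
    sock = socket.create_connection((host, port), timeout=5)
    try:
        tls = context.wrap_socket(sock, server_hostname=host)
    except Exception:
        sock.close()
        raise
    der = tls.getpeercert(binary_form=True)
    if der is None or not is_pinned(der, expected_fingerprint):
        tls.close()
        raise ssl.SSLError("server certificate does not match pinned fingerprint")
    return tls
```

With a check like this, an attacker on the same local network who redirects the robot to another address cannot complete the connection without the legitimate server's certificate and key. Pinning makes certificate rotation harder, so some designs pin a public key or an issuing CA instead.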

The White Paper grades the impact of embodied intelligence security issues from L1 to L5, with risks escalating from information leakage to personal harm. Any flaw leading to security failures is classified as a high-risk L4-L5 vulnerability. For example, a model could be tampered with so that it behaves abnormally and executes dangerous actions once it recognizes certain cues in specific scenarios, which constitutes an L5 threat.
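The five-level scale can be pictured as an ordered enumeration with a high-risk threshold at L4. The Python sketch below is only an illustration: the article describes the two ends of the scale and the L4-L5 high-risk band, so the intermediate levels are left unlabeled, and the names `ImpactLevel` and `is_high_risk` are hypothetical.

```python
from enum import IntEnum


class ImpactLevel(IntEnum):
    """Impact levels as described in the article; L2-L4 are not detailed there."""

    L1 = 1  # low end of the scale: information leakage
    L2 = 2
    L3 = 3
    L4 = 4
    L5 = 5  # high end of the scale: personal harm


def is_high_risk(level: ImpactLevel) -> bool:
    """L4 and L5 form the high-risk band mentioned in the article."""
    return level >= ImpactLevel.L4


if __name__ == "__main__":
    for level in ImpactLevel:
        print(level.name, "high risk" if is_high_risk(level) else "lower risk")
```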

“Just Being Functional Is Not Enough”

In the industrial sector, standards such as ISO 10218 are well-established, leading to relatively robust safety measures for collaborative robots, including force control and safety barriers. However, in the field of embodied intelligence, particularly for humanoid robots, safety advancements lag significantly behind functional iterations.

According to Chen Yanyi, Chief Strategy Officer at Guangzhou Siyi Cultural Company, while traditional industrial robot manufacturers excel in functional safety (SIL/PL levels), consumer-grade and research-grade products show severe deficiencies. The industry tends to prioritize demonstrations over safety, equating “being functional” with “being marketable.”

Some leading companies have begun establishing security emergency response centers and recruiting specialized security personnel, while many others still lack basic security capabilities. Qu noted that numerous companies have no dedicated robot security team; their security personnel manage only internal IT systems and do not address the protection of the robots themselves. Products often launch without comprehensive security testing, and responses to vulnerabilities are slow.

Security capability has yet to become a universally recognized standard among embodied intelligence companies, while leading overseas manufacturers deeply integrate safety and security features into their products. In 2024, Boston Dynamics released a dedicated safety white paper outlining safety design goals, architecture, and protective measures, and it builds encrypted communication, permission separation, and safety state monitoring into its products.

A robot that can run a marathon but may suddenly fall is far less desirable than a slower robot that never tumbles. Chen stated, “Safety is not the opposite of functionality; it is a part of it. Functions without safety assurances cannot pass procurement audits in B2B scenarios or establish trust in B2C contexts. Public expectations for robots to ‘not fall’ far exceed their desire for them to ‘run fast.’”

The Three Safety Tenets for Robots

What constitutes a truly safe embodied intelligent robot? The White Paper asserts that a genuinely safe robot must adhere to three unwavering principles: resilience against attacks, physical safety, and privacy protection. Robots should effectively withstand malicious attacks from network, near-field, or physical interfaces. Furthermore, even in cases of software failure, perception errors, or partial intrusions, they must ensure the ultimate protection against harm to persons and the environment. Additionally, data collected by robots, such as audio and video, spatial maps, behavioral trajectories, and user interaction data, must follow the principle of data minimization, with capabilities for encrypted transmission, storage isolation, and access auditing.
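The physical safety principle, that a robot should stay harmless even after a software failure or partial intrusion, is often approached with a final check that sits between the decision layer and the motors and treats every command as untrusted. The Python sketch below illustrates one such check. All names, limits, and units are assumptions chosen for the example; they are not taken from the White Paper or from any robot SDK.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class VelocityCommand:
    linear_mps: float   # forward speed in metres per second
    angular_rps: float  # turn rate in radians per second
    issued_at: float    # timestamp in seconds on a monotonic clock


@dataclass(frozen=True)
class SafetyLimits:
    max_linear_mps: float = 0.5   # illustrative value
    max_angular_rps: float = 1.0  # illustrative value
    max_age_s: float = 0.2        # commands older than this are ignored


def _clamp(value: float, limit: float) -> float:
    return max(-limit, min(limit, value))


def enforce(
    cmd: VelocityCommand, now: float, limits: SafetyLimits = SafetyLimits()
) -> VelocityCommand:
    """Return a command that is safe to execute, whatever its source.

    Stale, future-dated, or non-finite commands become a stop;
    everything else is clamped to the configured limits.
    """
    stop = VelocityCommand(0.0, 0.0, now)
    age = now - cmd.issued_at
    if age < 0 or age > limits.max_age_s:
        return stop
    if not (math.isfinite(cmd.linear_mps) and math.isfinite(cmd.angular_rps)):
        return stop
    return VelocityCommand(
        _clamp(cmd.linear_mps, limits.max_linear_mps),
        _clamp(cmd.angular_rps, limits.max_angular_rps),
        cmd.issued_at,
    )


if __name__ == "__main__":
    hostile = VelocityCommand(linear_mps=2.0, angular_rps=-3.0, issued_at=10.0)
    print(enforce(hostile, now=10.1))  # clamped to the limits
    print(enforce(hostile, now=11.0))  # stale, replaced by a stop
```

The design choice is that the limiter does not need to know whether a command came from the legitimate cloud model or from an attacker; it bounds the physical outcome either way. In practice such limits are usually enforced in a separate safety controller or in hardware rather than in application code.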

In September 2025, the international security research organization Alias Robotics and overseas security researchers disclosed a high-risk Bluetooth Low Energy (BLE) vulnerability known as UniPwn in Unitree’s Go2/B2 quadrupedal robots and G1/H1 humanoid robots, which could lead to physical risks. Unitree was forced to urgently release firmware fixes and updates and to establish a security team, incurring significant costs for research and development rework, offline recalls, brand crises, and customer compensation.

Chen emphasized that the costs of repair far exceed those of prevention. Companies should prioritize security from the first day of design, establishing dedicated security teams to implement comprehensive defenses from chip to cloud. They should employ hardware-level protections such as secure boot and trusted execution environments, avoid static keys and outdated middleware, and create vulnerability bounty programs and rapid response mechanisms, transforming external researchers from “adversaries” into “allies.” Most importantly, products must undergo dual certification for functional safety and cybersecurity by third-party evaluators before market release.

Industry experts predict that the next 3 to 5 years will be a crucial window for the industry’s security capabilities to mature. Security is shifting from an optional investment to a fundamental requirement, transitioning from post-attack fixes to core design elements. By 2028, leading embodied intelligence manufacturers are expected to establish Chief Security Officer roles, with security positions increasing from under 2% to over 10% of the workforce.

As the Humanoid Robot and Embodied Intelligence Standard System is implemented, CR certification will become a market entry prerequisite. The rise of “security as a service” will standardize third-party security audits and algorithm impact assessments, and AI-based intrusion detection and behavioral anomaly monitoring will be embedded in robotic operating systems. This will facilitate a shift from “repairing after an attack” to “predicting before an attack.”

Original article by NenPower. If reposted, please credit the source: https://nenpower.com/blog/vulnerabilities-in-humanoid-robots-raise-concerns-over-personal-privacy-and-safety/